For the past couple of days, this article by John Carlisle has been causing a bit of a stir on Twitter. The author claims that he has found statistical evidence that a surprisingly high proportion of randomised controlled trials (RCTs) contain data patterns that cannot have arisen by chance. Given that he has previously been instrumental in uncovering high-profile fraud cases, and also that he used data from articles that are known to be fraudulent (because they have been retracted) to calibrate his method, the implication is that some percentage of these impossible numbers are the result of fraud. The title of the article is provocative, too: "Data fabrication and other reasons for non-random sampling in 5087 randomised, controlled trials in anaesthetic and general medical journals". So yes, there are other reasons, but the implication is clear (and has been picked up by the media): There is a bit / some / a lot of data fabrication going on.

Because I anticipate that Carlisle's article is going to have quite an impact once more of the mainstream media decide to run with it, I thought I'd spend some time trying to understand exactly what Carlisle did. This post is a summary of what I've found out so far. I offer it in the hope that it may help some people to develop their own understanding of this interesting forensic technique, and perhaps as part of the ongoing debate about the limitations of such "post publication analysis" techniques (which also include things such as GRIM and statcheck).

[[Update 2017-06-12 19:35 UTC: There is now a much better post about this study by F. Perry Wilson here.]]

How Carlisle identified unusual patterns in the articles

Carlisle's analysis method was relatively simple. He examined the baseline differences between the groups in the trial on (most of) the reported continuous variables. These could be straightforward demographics like age and height, or they could be some kind of physiological measure taken at the start of the trial. Because participants have been randomised into these groups, any difference between them is (by definition) due to chance. Thus, we would expect a certain distribution of the p values associated with the statistical tests used to compare the groups; specifically, we would expect to see a uniform distribution (all p values are equally likely when the null hypothesis is true).

Not all of the RCTs report test statistics and/or p values for the difference between groups at baseline (it is not clear what a p value would mean, given that the null hypothesis is known to be true), but they can usually be calculated from the reported means and standard deviations. In his article, Carlisle gives a list of the R modules and functions that he used to reconstruct the test statistics and perform his other analyses.

Carlisle's idea is that, if the results have been fabricated (for example, in an extreme case, if the entire RCT never actually took place), then the fakers probably didn't pay too much attention to the p values of the baseline comparisons. After all, the main reason for presenting these statistics in the article is to show the reader that your randomisation worked and that there were no differences between the groups on any obvious confounders. So most people will just look at, say, the ages of the participants, and see that in the experimental condition the mean was 43.31 with an SD of 8.71, and in the control condition it was 42.74 with an SD of 8.52, and think "that looks pretty much the same". With 100 people in each condition, the p value for this difference is about .64, but we don't normally worry about that very much; indeed, as noted above, many authors wouldn't even provide a p value here.

Now consider what happens when you have ten baseline statistics, all of them fabricated. People are not very good at making up random numbers, and the fakers here probably won't even realise that as well as inventing means and SDs, they are also making up p values that ought to be uniformly distributed. So it is quite possible that they will make up mean/SD combinations that imply differences between groups that are either collectively too small (giving large p values) or too large (giving small p values).

Reproducing Carlisle's analyses

In order to better understand exactly what Carlisle did, I decided to reproduce a few of his analyses. I downloaded the online supporting information (two Excel files which I'll refer to as S1 and S2, plus a very short descriptive document) here. The Excel files have one worksheet per journal with the worksheet named NEJM (corresponding to articles published in the New England Journal of Medicine) being on top when you open the file, so I started there.

Carlisle's principal technique is to take the p values from the various baseline comparisons and combine them. His main way of doing this is with Stouffer's formula, which is what I've used in this post. Here's how that works:

1. Convert each p value into a z score.

2. Sum the z scores.

3. If there are k scores, divide the sum of the z scores from step 2 by the square root of k.

4. Calculate the one-tailed p value associated with the overall z score from step 3.

In R, that looks like this. Note that you just have to create the vector with your p values (first line) and then you can just copy/paste the second line, which implements the entire Stouffer formula.

That is, the p value associated with the test that these five p values arose by chance is .02. Now if we start suggesting that something is untoward based on the conventional significance threshold of .05 we're going to have a lot of unhappy innocent people, as Carlisle notes in his article (more than 1% of the articles he examined had a p value < .00001), so we can probably move on from this example quite rapidly. On the other hand, if you have a pattern like this in your baseline t tests:

then things are starting to get interesting. Remember that the p values should be uniformly distributed from 0 to 1, so we might wonder why all but one of them are above .50.

In Carlisle's model, suspicious distributions are typically those with too many high p values (above 0.50) or too many low ones, which give overall p values that are close to either 0 or 1, respectively. For example, if you subtract all five of the p values in the first list I gave above from 1, you get this:

and if you subtract that final p value from 1, you get the value of 0.0211038 that appears above.

To reduce the amount of work I had to do, I chose three articles that were near the top of the NEJM worksheet in the S1 file (in principle, the higher up the worksheet the study is, the bigger the problems) and that had not too many variables in them. I have not examined any other articles at this time, so what follows is a report on a very small sample and may not be representative.

Article 1

The first article I chose was by Jonas et al. (2002), "Clinical Trial of Lamivudine in Children with Chronic Hepatitis B", which is on line 8 of the NEJM worksheet in the S1 file. The trial number (cell B8 of the NEJM worksheet in the S1 file) is given as 121, so I next looked for this number in column A of the NEJM worksheet of the S2 file and found it on lines 2069 through 2074. Those lines allow us to see exactly which means and SDs were extracted from the article and used as the basis for the calculations in the S1 file. (The degree to which Carlisle has documented his analyses is extremely impressive.) In this case, the means and SDs correspond to the three baseline variables reported in Table 1 of Jonas et al.'s article:

By combining the p values from these variables, Carlisle arrived at an overall inverted (see p. 4 of his article) p value of .99997992. This needs to be subtracted from 1 to give a conventional p value, which in this case is .00002. That would appear to be very strong evidence against the null hypothesis that these numbers are the product of chance. However, there are a couple of problems here.

First, Carlisle made the following note in the S1 file (cell A8):

Second, and much more importantly, the three baseline variables here are clearly not independent. The first set of numbers ("Total score") is merely the sum of the other two, and arguably these other two measures of liver deficiencies are quite likely to be related to one another. Even if we ignore that last point and only remove "Total score", considering the other two variables to be completely independent, the overall p value for this RCT would change from .00002 to .001.

Carlisle discusses the general issue of non-independence on p. 7 of his article, and in the quote above he actually noted that the liver function scores in Jonas et al. were correlated. That makes it slightly unfortunate that he didn't take some kind of measures to compensate for the correlation. Leaving the raw numbers in the S1 file as if the scores were uncorrelated meant that Jonas et al.'s article appeared to be the seventh most severely problematic article in NEJM.

(* Update 2017-06-08 10:07 UTC: In the first version of this post, I wrote "it is slightly unfortunate that [Carlisle] apparently didn't spot the problem in this case". This was unfair of me, as the quote from the S1 file shows that Carlisle did indeed spot that the variables were correlated.)

Article 2

Looking further down file S1 for NEJM articles with only a few variables, I came across Glauser et al. (2010), "Ethosuximide, Valproic Acid, and Lamotrigine in Childhood Absence Epilepsy" on line 12. This has just two baseline variables for participants' IQs measured with two different instruments. The trial number is 557, which leads us to lines 10280 through 10285 of the NEJM worksheet in the S2 file. Each of the two variables has three values, corresponding to the three treatment groups.

Carlisle notes in his article (p. 7) that the p value for the one-way ANOVA comparing the groups for the second variable is misreported. The authors stated that this value is .07, whereas Carlisle calculates (and I concur, using ind.twoway.second() from the rpsychi package in R) that this should be around .0000007. Combining this p value with the .47 from the first variable, Carlisle obtains an overall (conventional) p value of .0004 to test the null hypothesis that these group differences are the result of chance.

But it seems to me that there may be a more parsimonious explanation for these problems. The two baseline variables are both measures of IQ, and one would expect them to be correlated. Inspection of the group means in Glauser et al.'s Table 2 (a truncated version of which is show above) suggests that the value for the Lamotrigine group on the WPPSI measure is a lot lower than might be expected, given that this group scored slightly higher on the WISC measure. Indeed, when I replaced the value of 92.0 with 103.0, I obtained a p value for the one-way ANOVA of almost exactly .07. Of course, there is no direct evidence that 92.0 was the result of a finger slip (or, perhaps, copying the wrong number from a printed table), but it certainly seems like a reasonable possibility. A value of 96.0 instead of 92.0 would also give a p value close to .07.

Article 3

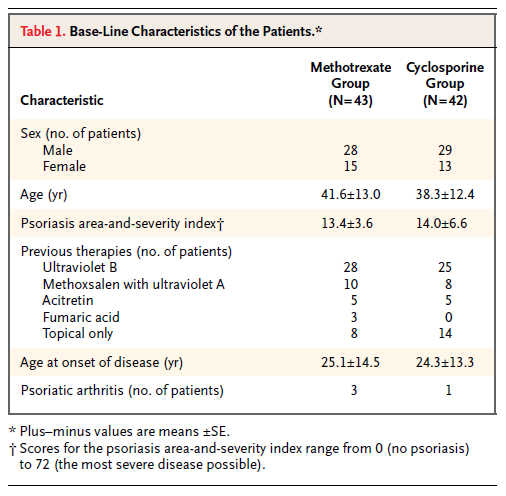

Continuing down the NEJM worksheet in the S1 file, I came to Heydendael et al. (2003) "Methotrexate versus Cyclosporine in Moderate-to-Severe Chronic Plaque Psoriasis" on line 33. Here there are three baseline variables, described on lines 3152 through 3157 of the NEJM worksheet in the S2 file. These variables turn out to be the patient's age at the start of the study, the extent of their psoriasis, and the age at which psoriasis first developed, as shown in Table 1, which I've reproduced here.

Carlisle apparently took the authors at their word that the numbers after the ± symbol were standard errors, as he seems to have converted them to standard deviations by multiplying them by the square root of the sample size (cells F3152 through F3157 in the S2 file). However, it seems clear that, at least in the case of the ages, the "SEs" can only have been SDs. The values calculated by Carlisle for the SDs are around 80, which is an absurd standard deviation for human ages; in contrast, the values ranging from 12.4 through 14.5 in the table shown above are quite reasonable as SDs. It is not clear whether the "SE" values in the table for the psoriasis area-and-severity index are in fact likely to be SDs, or whether Carlisle's calculated SD values (23.6 and 42.8, respectively) are more likely to be correct.

Carlisle calculated an overall p value of .005959229 for this study. Assuming that the SDs for the age variables are in fact the numbers listed as SEs in the above table, I get an overall p value of around .79 (with a little margin for error due to rounding error on the means and SDs, which are given to only one decimal place).

Conclusion

The above analyses show how easy it can be to misinterpret published articles when conducting systematic forensic analyses. I can't know what was going through Carlisle's mind when he was reading the articles that I selected to check, but having myself been through the exercise of reading several hundred articles over the course of a few evenings looking for GRIM problems, I can imagine that obtaining a full understanding of the relations between each of the baseline variables may not always have been possible.

I want to make it very clear that this post is not intended as a "debunking" or "takedown" of Carlisle's article, for several reasons. First, I could have misunderstood something about his procedure (my description of it in this post is guaranteed to be incomplete). Second, Carlisle has clearly put a phenomenal amount of effort—thousands of hours, I would guess—into these analyses, for which he deserves a vast amount of credit (and does not deserve to be the subject of nitpicking). Third, Carlisle himself noted in his article (p. 8) that is was inevitable that he had made a certain number of mistakes. Fourth, I am currently in a very similar line of business myself at least part of the time, with GRIM and the Cornell Food and Brand Lab saga, and I know that I have made multiple errors, sometimes in public, where I was convinced that I had found a problem and someone gently pointed out that I had missed something (and that something was usually pretty straightforward). I should also point out that the quotes around the word "bombshell" in the title of this post are not meant to belittle the results of Carlisle's article, but merely to indicate that this is how some media outlets will probably refer to it (using a word that I try to avoid like the plague).

If I had a takeaway message, I think it would be that this technique of examining the distribution of p values from baseline variable comparisons is likely to be less reliable as a predictor of genuine problems (such as fraud) when the number of variables is small. In theory the overall probability that the results are legitimate and correctly reported is completely taken care of by the p values and Stouffer's formula for combining them, but in practice when there are only a few variables it only takes a small issue—such as a typo, or some unforeseen non-independence—to distort the results and make it appear as if there is something untoward when there probably isn't.

I would also suggest that when looking for fabrication, clusters of small p values—particularly those below .05—may not be as good an indication as clusters of large p values. This is just a continuation of my argument about the p value of .07 (or .0000007) from Article 2, above. I think that Carlisle's technique is very clever and will surely catch many people who do not realise that their "boring" numbers showing no difference will produce p values that need to follow a certain distribution, but I question whether many people are fabricating data that (even accidentally) shows a significant baseline difference between groups, when such differences might be likely to attract the attention of the reviewers.

To conclude: One of the reasons that science is hard is that it requires a lot of attention to detail, which humans are not always very good at it. Even people who are obviously phenomenally good at it (including John Carlisle!) make mistakes. We learned when writing our GRIM article what an error-prone process the collection and analysis of data can be, whether this be empirical data gathered from subjects (some of the stories about how their data were collected or curated that were volunteered by the authors whom we contacted to ask for their datasets were both amusing and slightly terrifying) or data extracted from published articles for the purposes of meta-analysis or forensic investigation. I have a back burner project to develop a "data hygiene" course, and hope to get round to actually writing and giving it one day!

Because I anticipate that Carlisle's article is going to have quite an impact once more of the mainstream media decide to run with it, I thought I'd spend some time trying to understand exactly what Carlisle did. This post is a summary of what I've found out so far. I offer it in the hope that it may help some people to develop their own understanding of this interesting forensic technique, and perhaps as part of the ongoing debate about the limitations of such "post publication analysis" techniques (which also include things such as GRIM and statcheck).

[[Update 2017-06-12 19:35 UTC: There is now a much better post about this study by F. Perry Wilson here.]]

How Carlisle identified unusual patterns in the articles

Carlisle's analysis method was relatively simple. He examined the baseline differences between the groups in the trial on (most of) the reported continuous variables. These could be straightforward demographics like age and height, or they could be some kind of physiological measure taken at the start of the trial. Because participants have been randomised into these groups, any difference between them is (by definition) due to chance. Thus, we would expect a certain distribution of the p values associated with the statistical tests used to compare the groups; specifically, we would expect to see a uniform distribution (all p values are equally likely when the null hypothesis is true).

Not all of the RCTs report test statistics and/or p values for the difference between groups at baseline (it is not clear what a p value would mean, given that the null hypothesis is known to be true), but they can usually be calculated from the reported means and standard deviations. In his article, Carlisle gives a list of the R modules and functions that he used to reconstruct the test statistics and perform his other analyses.

Carlisle's idea is that, if the results have been fabricated (for example, in an extreme case, if the entire RCT never actually took place), then the fakers probably didn't pay too much attention to the p values of the baseline comparisons. After all, the main reason for presenting these statistics in the article is to show the reader that your randomisation worked and that there were no differences between the groups on any obvious confounders. So most people will just look at, say, the ages of the participants, and see that in the experimental condition the mean was 43.31 with an SD of 8.71, and in the control condition it was 42.74 with an SD of 8.52, and think "that looks pretty much the same". With 100 people in each condition, the p value for this difference is about .64, but we don't normally worry about that very much; indeed, as noted above, many authors wouldn't even provide a p value here.

Now consider what happens when you have ten baseline statistics, all of them fabricated. People are not very good at making up random numbers, and the fakers here probably won't even realise that as well as inventing means and SDs, they are also making up p values that ought to be uniformly distributed. So it is quite possible that they will make up mean/SD combinations that imply differences between groups that are either collectively too small (giving large p values) or too large (giving small p values).

Reproducing Carlisle's analyses

In order to better understand exactly what Carlisle did, I decided to reproduce a few of his analyses. I downloaded the online supporting information (two Excel files which I'll refer to as S1 and S2, plus a very short descriptive document) here. The Excel files have one worksheet per journal with the worksheet named NEJM (corresponding to articles published in the New England Journal of Medicine) being on top when you open the file, so I started there.

Carlisle's principal technique is to take the p values from the various baseline comparisons and combine them. His main way of doing this is with Stouffer's formula, which is what I've used in this post. Here's how that works:

1. Convert each p value into a z score.

2. Sum the z scores.

3. If there are k scores, divide the sum of the z scores from step 2 by the square root of k.

4. Calculate the one-tailed p value associated with the overall z score from step 3.

In R, that looks like this. Note that you just have to create the vector with your p values (first line) and then you can just copy/paste the second line, which implements the entire Stouffer formula.

plist = c(.95, .84, .43, .75, .92)

1 - pnorm(sum(sapply(plist, qnorm))/sqrt(length(plist)))

[1] 0.02110381

That is, the p value associated with the test that these five p values arose by chance is .02. Now if we start suggesting that something is untoward based on the conventional significance threshold of .05 we're going to have a lot of unhappy innocent people, as Carlisle notes in his article (more than 1% of the articles he examined had a p value < .00001), so we can probably move on from this example quite rapidly. On the other hand, if you have a pattern like this in your baseline t tests:

plist = c(.95, .84, .43, .75, .92, .87, .83, .79, .78, .84)

1 - pnorm(sum(sapply(plist, qnorm))/sqrt(length(plist)))

[1] 0.00181833

then things are starting to get interesting. Remember that the p values should be uniformly distributed from 0 to 1, so we might wonder why all but one of them are above .50.

In Carlisle's model, suspicious distributions are typically those with too many high p values (above 0.50) or too many low ones, which give overall p values that are close to either 0 or 1, respectively. For example, if you subtract all five of the p values in the first list I gave above from 1, you get this:

plist = c(.05, .16, .57, .25, .08)

1 - pnorm(sum(sapply(plist, qnorm))/sqrt(length(plist)))

[1] 0.9788962

and if you subtract that final p value from 1, you get the value of 0.0211038 that appears above.

To reduce the amount of work I had to do, I chose three articles that were near the top of the NEJM worksheet in the S1 file (in principle, the higher up the worksheet the study is, the bigger the problems) and that had not too many variables in them. I have not examined any other articles at this time, so what follows is a report on a very small sample and may not be representative.

Article 1

The first article I chose was by Jonas et al. (2002), "Clinical Trial of Lamivudine in Children with Chronic Hepatitis B", which is on line 8 of the NEJM worksheet in the S1 file. The trial number (cell B8 of the NEJM worksheet in the S1 file) is given as 121, so I next looked for this number in column A of the NEJM worksheet of the S2 file and found it on lines 2069 through 2074. Those lines allow us to see exactly which means and SDs were extracted from the article and used as the basis for the calculations in the S1 file. (The degree to which Carlisle has documented his analyses is extremely impressive.) In this case, the means and SDs correspond to the three baseline variables reported in Table 1 of Jonas et al.'s article:

By combining the p values from these variables, Carlisle arrived at an overall inverted (see p. 4 of his article) p value of .99997992. This needs to be subtracted from 1 to give a conventional p value, which in this case is .00002. That would appear to be very strong evidence against the null hypothesis that these numbers are the product of chance. However, there are a couple of problems here.

First, Carlisle made the following note in the S1 file (cell A8):

Patients were randomly assigned. Variables correlated (histologic scores). Supplementary baseline data reported as median (range). p values noted by authors.But in cells O8, P8, and Q8, he gives different p values from those in the article, which I presume he calculated himself. After subtracting these p values from 1 (to take into account the inversion of the input p values that Carlisle describes on p. 4 of his article), we can see that the third p value in cell Q8 is rather different (.035 has become approximately .10). It appears that the original p values were derived from a non-parametric test which would be impossible to reproduce without the data, so presumably Carlisle assumed a parametric model (for example, p values can be calculated for a t test from the mean, SD, and sample sizes of the two groups). Note that in this case, the difference in p values actually works against a false positive, but the general point is that not all p value analyses can be reproduced from the summary statistics.

Second, and much more importantly, the three baseline variables here are clearly not independent. The first set of numbers ("Total score") is merely the sum of the other two, and arguably these other two measures of liver deficiencies are quite likely to be related to one another. Even if we ignore that last point and only remove "Total score", considering the other two variables to be completely independent, the overall p value for this RCT would change from .00002 to .001.

plist = c(0.997916597, 0.998464969, 0.900933333) 1 - pnorm(sum(sapply(plist, qnorm))/sqrt(length(plist))) [1] 2.007969e-05

plist = c(0.998464969, 0.900933333) #omitting "Total score" 1 - pnorm(sum(sapply(plist, qnorm))/sqrt(length(plist))) [1] 0.001334679

Carlisle discusses the general issue of non-independence on p. 7 of his article, and in the quote above he actually noted that the liver function scores in Jonas et al. were correlated. That makes it slightly unfortunate that he didn't take some kind of measures to compensate for the correlation. Leaving the raw numbers in the S1 file as if the scores were uncorrelated meant that Jonas et al.'s article appeared to be the seventh most severely problematic article in NEJM.

(* Update 2017-06-08 10:07 UTC: In the first version of this post, I wrote "it is slightly unfortunate that [Carlisle] apparently didn't spot the problem in this case". This was unfair of me, as the quote from the S1 file shows that Carlisle did indeed spot that the variables were correlated.)

Article 2

Looking further down file S1 for NEJM articles with only a few variables, I came across Glauser et al. (2010), "Ethosuximide, Valproic Acid, and Lamotrigine in Childhood Absence Epilepsy" on line 12. This has just two baseline variables for participants' IQs measured with two different instruments. The trial number is 557, which leads us to lines 10280 through 10285 of the NEJM worksheet in the S2 file. Each of the two variables has three values, corresponding to the three treatment groups.

Carlisle notes in his article (p. 7) that the p value for the one-way ANOVA comparing the groups for the second variable is misreported. The authors stated that this value is .07, whereas Carlisle calculates (and I concur, using ind.twoway.second() from the rpsychi package in R) that this should be around .0000007. Combining this p value with the .47 from the first variable, Carlisle obtains an overall (conventional) p value of .0004 to test the null hypothesis that these group differences are the result of chance.

But it seems to me that there may be a more parsimonious explanation for these problems. The two baseline variables are both measures of IQ, and one would expect them to be correlated. Inspection of the group means in Glauser et al.'s Table 2 (a truncated version of which is show above) suggests that the value for the Lamotrigine group on the WPPSI measure is a lot lower than might be expected, given that this group scored slightly higher on the WISC measure. Indeed, when I replaced the value of 92.0 with 103.0, I obtained a p value for the one-way ANOVA of almost exactly .07. Of course, there is no direct evidence that 92.0 was the result of a finger slip (or, perhaps, copying the wrong number from a printed table), but it certainly seems like a reasonable possibility. A value of 96.0 instead of 92.0 would also give a p value close to .07.

xsd = c(16.6, 14.5, 14.8)

xn = c(155, 149, 147)

an1 = ind.oneway.second(m=c(99.1, 92.0, 100.0), sd=xsd, n=xn)

an1$anova.table$F[1] [1] 12.163

1 - pf(q=12.163, df1=2, df2=448)

[1] 7.179598e-06

an2 = ind.oneway.second(m=c(99.1, 103.0, 100.0), sd=xsd, n=xn)

an2$anova.table$F[1]

[1] 2.668

1 - pf(q=2.668, df1=2, df2=448)

[1] 0.0704934

It also seems slightly strange that someone who was fabricating data would choose to make up this particular pattern. After having invented the numbers for the WISC measure, one would presumably not add a few IQ points to two of the values and subtract a few from the other, thus inevitably pushing the groups further apart, given that the whole point of fabricating baseline IQ data would be to show that the randomisation had succeeded; to do the opposite would seem to be very sloppy. (However, it may well be the case that people who feel the need to fabricate data are typically not especially intelligent and/or statistically literate; the reason that there are very few "criminal masterminds" is that most masterminds find a more honest way to earn a living.)

Article 3

Continuing down the NEJM worksheet in the S1 file, I came to Heydendael et al. (2003) "Methotrexate versus Cyclosporine in Moderate-to-Severe Chronic Plaque Psoriasis" on line 33. Here there are three baseline variables, described on lines 3152 through 3157 of the NEJM worksheet in the S2 file. These variables turn out to be the patient's age at the start of the study, the extent of their psoriasis, and the age at which psoriasis first developed, as shown in Table 1, which I've reproduced here.

Carlisle apparently took the authors at their word that the numbers after the ± symbol were standard errors, as he seems to have converted them to standard deviations by multiplying them by the square root of the sample size (cells F3152 through F3157 in the S2 file). However, it seems clear that, at least in the case of the ages, the "SEs" can only have been SDs. The values calculated by Carlisle for the SDs are around 80, which is an absurd standard deviation for human ages; in contrast, the values ranging from 12.4 through 14.5 in the table shown above are quite reasonable as SDs. It is not clear whether the "SE" values in the table for the psoriasis area-and-severity index are in fact likely to be SDs, or whether Carlisle's calculated SD values (23.6 and 42.8, respectively) are more likely to be correct.

Carlisle calculated an overall p value of .005959229 for this study. Assuming that the SDs for the age variables are in fact the numbers listed as SEs in the above table, I get an overall p value of around .79 (with a little margin for error due to rounding error on the means and SDs, which are given to only one decimal place).

xn = c(43, 42)

an1 = ind.oneway.second(m=c(41.6, 38.3), sd=c(13.0, 12.4), n=xn)

an1$anova.table$F[1]

[1] 1.433

1 - pf(q=1.433, df1=1, df2=83)

[1] 0.2346833

an2 = ind.oneway.second(m=c(25.1, 24.3), sd=c(14.5, 13.3), n=xn) an2$anova.table$F[1] [1] 0.07

1 - pf(q=0.07, df1=1, df2=83)

[1] 0.7919927 1 - pf(q=0.07, df1=1, df2=83)

# Keep value for psoriasis from cell P33 of S1 file

plist = c(0.2346833, 0.066833333, 0.7919927) 1 - pnorm(sum(sapply(plist, qnorm))/sqrt(length(plist))) [1] 0.7921881

The fact that Carlisle apparently did not spot this issue is slightly ironic given that he wrote about the general problem of confusion between standard deviations and standard errors in his article (pp. 5–6) and also included comments about possible mislabelling by authors of SDs and SEs in several of the notes in column A of the S1 spreadsheet file.

Conclusion

The above analyses show how easy it can be to misinterpret published articles when conducting systematic forensic analyses. I can't know what was going through Carlisle's mind when he was reading the articles that I selected to check, but having myself been through the exercise of reading several hundred articles over the course of a few evenings looking for GRIM problems, I can imagine that obtaining a full understanding of the relations between each of the baseline variables may not always have been possible.

I want to make it very clear that this post is not intended as a "debunking" or "takedown" of Carlisle's article, for several reasons. First, I could have misunderstood something about his procedure (my description of it in this post is guaranteed to be incomplete). Second, Carlisle has clearly put a phenomenal amount of effort—thousands of hours, I would guess—into these analyses, for which he deserves a vast amount of credit (and does not deserve to be the subject of nitpicking). Third, Carlisle himself noted in his article (p. 8) that is was inevitable that he had made a certain number of mistakes. Fourth, I am currently in a very similar line of business myself at least part of the time, with GRIM and the Cornell Food and Brand Lab saga, and I know that I have made multiple errors, sometimes in public, where I was convinced that I had found a problem and someone gently pointed out that I had missed something (and that something was usually pretty straightforward). I should also point out that the quotes around the word "bombshell" in the title of this post are not meant to belittle the results of Carlisle's article, but merely to indicate that this is how some media outlets will probably refer to it (using a word that I try to avoid like the plague).

If I had a takeaway message, I think it would be that this technique of examining the distribution of p values from baseline variable comparisons is likely to be less reliable as a predictor of genuine problems (such as fraud) when the number of variables is small. In theory the overall probability that the results are legitimate and correctly reported is completely taken care of by the p values and Stouffer's formula for combining them, but in practice when there are only a few variables it only takes a small issue—such as a typo, or some unforeseen non-independence—to distort the results and make it appear as if there is something untoward when there probably isn't.

I would also suggest that when looking for fabrication, clusters of small p values—particularly those below .05—may not be as good an indication as clusters of large p values. This is just a continuation of my argument about the p value of .07 (or .0000007) from Article 2, above. I think that Carlisle's technique is very clever and will surely catch many people who do not realise that their "boring" numbers showing no difference will produce p values that need to follow a certain distribution, but I question whether many people are fabricating data that (even accidentally) shows a significant baseline difference between groups, when such differences might be likely to attract the attention of the reviewers.

To conclude: One of the reasons that science is hard is that it requires a lot of attention to detail, which humans are not always very good at it. Even people who are obviously phenomenally good at it (including John Carlisle!) make mistakes. We learned when writing our GRIM article what an error-prone process the collection and analysis of data can be, whether this be empirical data gathered from subjects (some of the stories about how their data were collected or curated that were volunteered by the authors whom we contacted to ask for their datasets were both amusing and slightly terrifying) or data extracted from published articles for the purposes of meta-analysis or forensic investigation. I have a back burner project to develop a "data hygiene" course, and hope to get round to actually writing and giving it one day!

This is a great post, Nick. And I agree that Carlisle did an amazing amount of work for this paper.

ReplyDeleteYou mentioned the non-independence of some of these baseline measures. Here is a quick R simulation that shows that even if the null is true, but the baseline measures are correlated, the p-values from Stouffer's method will not follow a uniform distribution. Which I guess makes sense, since one of the assumptions of this method is that the combined p-values are from independent test. I am not sure how careful these baseline measures were screened, but it is possible that a perfectly harmless (non-fraudulent) result could be flagged as being highly significant, because of non-independence of the baseline measure, at a rate that is larger than the nominal threshold.

#R code below

baselinefraud <- function(n,a) {

u <- rnorm(n,0,1)

x1 <- a*u + rnorm(n,0,sqrt(1-a^2))

x2 <- a*u + rnorm(n,0,sqrt(1-a^2))

tr <- rbinom(n,1,.5)

x1p <- t.test(x1~tr)$p.value

x2p <- t.test(x2~tr)$p.value

plist <- c(x1p,x2p)

#stouffers p

sp <- 1-pnorm(sum(sapply(plist,qnorm))/sqrt(length(plist)))

return(c(n,a,sp))

}

library(plyr)

library(ggplot2)

res <- data.frame(raply(50000,baselinefraud(100,round(runif(1,-.04,.94),1)),.progress = "text"))

names(res) <- c("N","a","p")

ggplot(res,aes(p)) + geom_histogram(binwidth = .02) + facet_wrap(~a)

Hello Nick,

ReplyDeleteyou emphasise the difficulty in getting the details correct, and I fear Muphry is in the building...

You give a list of p-values:

plist = c(.95, .84, .43, .75, .92, .87, .83, .79, .78, .84)

and say

"...we might wonder why all but [glaring omission] of them are above .50."

I misread that the first time as "...all of them are above .50.", and thought "They patently are not.". It's obviously a typo (or a lack of typing), but it's one of those minor errors that can lead to an ovaceous physiognomy.

Happy editing!

Ha! I actually spotted that a couple of hours ago, but only partly edited it. Fixed now, thanks!

DeleteThanks very much for this. It is a serious matter to accuse people of fraud, so the evidence needs to be scrutinised carefully. Everyone should be grateful to you for making a start.

ReplyDeleteI've become quite suspicious of results that are based only on p values. The problem is, of course, that we never know prior probabilities. But it would be very alarming indeed if the prior probability if fraud were as high as 0.5. Presumaby it is much smaller than that. For example if 2% of studies were fraudulent. then, even observing P = 0.0001 would give a false positive rate of just under 5% (R script and assumptions can be found in http://biorxiv.org/content/early/2017/06/04/144337 )

Great post!

ReplyDeleteCould you elobarate on the problem of multiple testing in Carlisle´s paper? He has tested several papers, in how many is the significant p-value estimated to be due to chance?

One other big think is that I am not sure about the validation of this method. It has been shown accurately spot fradulent data in reserachers with all of their papers having low p-values. Thus what is the PPV and NPV of this method?

I haven't thought about the multiple testing problem yet. Again, my aim here is not to examine every aspect of Carlisle's approach, but rather to show that the issues may be less catastrophic than they might appear, once you open the individual articles.

DeleteThanks for this excellent post.

ReplyDeleteYes. The fact that baseline covariates are generally correlated (sex and height is a simple example) means that methods for combining P-values as if independent are invalid. Carlisle notes the problem but does not really address it in my view.

Second, very few trials (almost no large ones)are completely randomised. Permuted sub-centre blocks are common within pharma and miminimsation ex-pharma. Since differences between centres are common and since the whole point of minimisation is to reduce the imbalance of covariates, this means that the expected value of the ratio of the between sum of squares to the within sum of squares will be less than one. (See discussion in my paper Added values http://onlinelibrary.wiley.com/doi/10.1002/sim.2074/abstract ) Hence abnormally high P-values may be expected when testing baseline balance.

Again Carlisle notes the permuted blocks issue but I don't think he really appreciates the problem. He excludes stratified trials but does not seem to appreciate that (nearly) all multi-centre trials are stratified by centre. Covariates simple do not vary randomly from centre to centre. A good example is given by the BHAT study which Frank Harrell and I commented on some years ago. See https://www.ncbi.nlm.nih.gov/pubmed/9253383

I certainly agree with you that we all make errors in analysis and can confess to quite a few.

So, kudos indeed to Carlisle but the lesson for the clinical trial commentators is, if you are not a statistician, best to contact one first.

Nice work.

ReplyDeleteKudos for all this work done. Which will doubtlessly gain in appreciation as time goes by

Thanks for the post and comments. I had not intended people to think that I was trying to identify fraudulent articles with this paper, although that might ultimately be one of a number of consequences. My intention was to assess how this test of baseline data behaves when applied to a large number of RCTs. As a number of commentators have noted, the assumptions behind the test are bound to be incorrect in many cases: the assumption of a normal distribution; the assumption of independence; the assumption that recruitment, which takes time, doesn't interact with time-varying factors [which will cause means to be more similar than the SD and participant number would indicate], whether or not block allocation has exacerbated the effect.

ReplyDeleteOnce the method has calculated a small p value the next question to answer is "why?" And there have been some excellent observations about possible causes. My first suspicion is I've made an error and I've found errors here and there, most of which are inconsequential but some of which will have caused a small p value, which is great because we can dispense with worrying about other causes.

Nick and others have wondered why I didn't adjust for 'obvious' errors, such as SEM being SD (& vice versa), or a missed decimal place etc. The reason is because I'd have had to applied my personal judgement, which in most cases would have been the same as your sensible judgement, but occasionally we'd disagree. So I applied the method 'dumbly', trying to copy the numbers as presented in the trial. Yes, the next step would be to say "Aha, the cause of this small p value is a typographical error". The reason for separating the initial 'dumb' calculation from the obvious explanation is so that we can log the rate of human error, to which we're all prone, and in so doing we may have a window on error rates to things that matter more than baseline variables, such as results.

I anticipate that most of the RCTs with 'small p values' will be due to: my mistakes; authors' honest mistakes; type setters mistakes; editors' mistakes; correlation; non-normal distributions; time-varying baseline variables; stratification 'overflow'. I think that, perhaps, we might also detect some signals of data fabrication, and I think that these will depend upon looking at multiple RCTs from author groups, as most won't generate really extreme p values. And of course, we'd have to check that we can't explain unusual patterns with any of the mechanisms we've outlined above, and even then the first stage would be confidential enquiries following a standard protocol, which is usually that stipulated by the Committee on Publication Ethics (COPE).

The editorial that accompanied my paper discusses some of these issues. It is a very interesting question, how journals might work together to protect the integrity of the science they publish, the patients and the scientists and the public they serve, whilst still encouraging and harnessing academic research.

Anyhow, thanks for the comments and please do keep looking at the appendices and think how we might improve these methods. John.

By the way, the "Data fabrication" in the title refers to the RCTs in the survey that we know were fabricated and that also generated small p values. Some thought that I was stating that all 5087 RCTs, but I was not. I was just stating the data fabrication is one reason for this method generating small p values.

ReplyDeleteI thought that you might like to check my clunky code for errors, or use it as you see fit, or suggest improvements. It is a bit too long for one post...

ReplyDelete#This is part one of two parts of code

require("MBESS")

require("binom")

require("rpsychi")

require("BSDA")

JAMA_trials <- as.data.frame(JAMA_trials)

MaxC1 <- NA

Maxtrial <- max(JAMA_trials$trial)

for(c in 1:Maxtrial){

C1 <- which(JAMA_trials[,1]==c)

Maxmeasure <- max(JAMA_trials[C1,2])

MaxC1[c] <- Maxmeasure

}

PJAMAtrial <- matrix(data=NA,nrow=Maxtrial,ncol=max(JAMA_trials$measure,na.rm=T))

ANOVAP <- matrix(data=NA,nrow=Maxtrial,ncol=max(JAMA_trials$measure,na.rm=T))

PJAMAtrialsd <- matrix(data=NA,nrow=Maxtrial,ncol=max(JAMA_trials$measure,na.rm=T))

for(r in 1:Maxtrial){

PPmean <- PPmeansd <- NA

length(PPmean) <- max(JAMA_trials$measure,na.rm=T)

length(PPmeansd) <- max(JAMA_trials$measure,na.rm=T)

for(s in 1:MaxC1[r]){

Row <- apply(JAMA_trials,1,function(row) any(row[1]==r & row[2]==s))

m <- 15000

Length <- length(which(Row))

Lines <- which(Row)

Sample <- Samplesd <- rep(1:Length)

DiffSample <- DiffSamplesd <- rep(1:m)

Value <- rep(1:Length)

Participants <- rep(1:Length)

Standard <- rep(1:Length)

for(j in 1:Length){

cum <- JAMA_trials[Lines[j],4]*JAMA_trials[Lines[j],5]

people <- JAMA_trials[Lines[j],4]

Value[j] <- cum

SSDD <- ifelse(JAMA_trials[Lines[j],4]>300,JAMA_trials[Lines[j],6],s.u(JAMA_trials[Lines[j],6],JAMA_trials[Lines[j],4]))

cumvar <- JAMA_trials[Lines[j],4]*SSDD^2

Standard[j] <- cumvar

Participants[j] <- people

}

Meanmean <- sum(Value)/sum(Participants)

Meanvar <- sum(Standard)/sum(Participants)

Meansd <- Meanvar^0.5

MeanNo <- sum(Participants)/Length

Diff <- sum((JAMA_trials[which(Row),5]-Meanmean)^2)

Diffsd <- sum((JAMA_trials[which(Row),6]-Meansd)^2)

SEMsample <- Meansd/sqrt(MeanNo)

meansim <- NA

for(z in 1:m){

meansim <- rnorm(1,mean=Meanmean,sd=SEMsample)

for(i in 1:Length){

Sample[i] <- round(sum(rnorm(JAMA_trials[Lines[i],4],mean=meansim,sd=Meansd)/JAMA_trials[Lines[i],4]),JAMA_trials[Lines[i],7])

Samplesd[i] <- round(sd(rnorm(JAMA_trials[Lines[i],4],mean=meansim,sd=Meansd)),JAMA_trials[Lines[i],8])

}

MeanSample <- sum(Sample*Participants)/sum(Participants)

MeanSamplesd <- sum(Samplesd*Participants)/sum(Participants)

DiffS <- (Sample-MeanSample)^2

DiffSsd <- (Samplesd-MeanSamplesd)^2

DiffSample[z] <- sum(DiffS)

DiffSamplesd[z] <- sum(DiffSsd)

}

PP1 <- sum(DiffSample < Diff)/m

PPsd1 <- sum(DiffSamplesd < Diffsd)/m

PP2 <- sum(DiffSample <= Diff)/m

PPsd2 <- sum(DiffSamplesd <= Diffsd)/m

PPmean[s] <- (PP1+PP2)/2

PPmeansd[s] <- (PPsd1+PPsd2)/2

}

PJAMAtrial[r,] <- PPmean

PJAMAtrialsd[r,] <- PPmeansd

}

Please use R markdown and publish it, along with the results. �� (publish on Rpubs, it's a easy!)

DeleteAnd here is part 2.

ReplyDeleteI used a csv named JAMA_trials, just because I was last using it for RCTs from JAMA (and because the resultant p values were PJAMA). You could of course change the name throughout the code.

The 1-sided p values for individual variables in each RCT are contained in MinP.

The 1-sided p values for RCTs are contained in RCTmixpnorm (combining p values for individual variables with sum of z).

The six different ways of combining individual variable p values, including sum of z, are in JAMApvar.

#p value from summary data ANOVA

require("CarletonStats")

ANOVAtable2 <- NA

ANOVAP2 <- matrix(data=NA,nrow=Maxtrial,ncol=max(JAMA_trials$measure,na.rm=T))

for(r in 1:Maxtrial){

for(s in 1:MaxC1[r]){

Row <- apply(JAMA_trials,1,function(row) any(row[1]==r & row[2]==s))

Lines <- which(Row)

ANOVAtable2 <- invisible(anovaSummarized(JAMA_trials[Lines,4],JAMA_trials[Lines,5],JAMA_trials[Lines,6]))

ANOVAP2[r,s] <- 1-(as.numeric(ANOVAtable2[8]))

}

}

#p value from summary data t test

require("BSDA")

MaxC1 <- NA

Maxtrial <- max(JAMA_trials$trial)

for(c in 1:Maxtrial){

C1 <- which(JAMA_trials[,1]==c)

Maxmeasure <- max(JAMA_trials[C1,2])

MaxC1[c] <- Maxmeasure

}

TTESTP <- matrix(data=NA,nrow=Maxtrial,ncol=max(JAMA_trials$measure,na.rm=T))

for(r in 1:Maxtrial){

for(s in 1:MaxC1[r]){

Row <- apply(JAMA_trials,1,function(row) any(row[1]==r & row[2]==s))

Lines <- which(Row)

ifelse(length(Lines)>2,TTESTP[r,s]<-NA,TTESTP[r,s]<-1-(tsum.test(JAMA_trials[Lines[1],5],JAMA_trials[Lines[1],6],JAMA_trials[Lines[1],4],JAMA_trials[Lines[2],5],JAMA_trials[Lines[2],6],JAMA_trials[Lines[2],4])$p.value))

}

}

MinP <- matrix(data=NA,nrow=Maxtrial,ncol=max(JAMA_trials$measure,na.rm=T))

choice <- NA

for(r in 1:Maxtrial){

for(s in 1:MaxC1[r]){

choice <- c(PJAMAtrial[r,s],ANOVAP2[r,s],TTESTP[r,s])

MCP <- (PJAMAtrial[r,s]-0.5)^2

ANP <- (ANOVAP2[r,s]-0.5)^2

TTP <- (TTESTP[r,s]-0.5)^2

near0.5 <- which.min(c(MCP,ANP,TTP))

MinP[r,s] <- choice[near0.5]

}

}

#Stouffer's method which is sumz

RCTmixpnorm <- NA

MinP <- as.data.frame(MinP)

for(k in 1:length(MaxC1)){

p <- NA

z <- NA

z <- which(!is.na(MinP[k,]))

p <- as.numeric(MinP[k,z])

erf <- function(x) 2 * pnorm(2 * x/ sqrt(2)) - 1

erfinv <- function(x) qnorm( (x+1)/2 ) / sqrt(2)

pcomb <- function(p) (1-erf(sum(sqrt(2) * erfinv(1-2*p))/sqrt(2*length(p))))/2

pl <- NA

pl <- length(p)

RCTmixpnorm[k] <- pcomb(p)

}

#logit mean Wilkinson sumlog sum and sum z methods

require("metap")

JAMApvar <- matrix(data=NA,nrow=Maxtrial,ncol=6)

measures2 <- which(MaxC1>1)

measures4 <- which(MaxC1>3)

for(k in 1:Maxtrial){

z <- which(!is.na(MinP[k,]))

p <- as.numeric(MinP[k,z])

JAMApvar[k,1] <- logitp(p)$p

JAMApvar[k,3] <- minimump(p)$p

JAMApvar[k,4] <- sumlog(p)$p

JAMApvar[k,5] <- sump(p)$p

JAMApvar[k,6] <- sumz(p)$p

}

for(k in measures4){

z <- which(!is.na(MinP[k,]))

p <- as.numeric(MinP[k,z])

JAMApvar[k,2] <- meanp(p)$p

}

This web app uses Stouffer's method to combine p values , can be used to reproduce Carlisle's analysis, might be helpful for those who are uncomfortable with using R

ReplyDeletehttps://medstats.github.io/stouffer.html

Interesting clarifications from John Carlisle.

ReplyDeleteI would like to pick up on the time varying covariates point.

1) This is irrelevant if simple randomisation is used since recruitment to A or B of patients over time will be random

2) However, in practice this method is almost never used and both permuted blocks (the usual pharmaceutical industry approach) and minimisation (very common in MRC and EORTC trials, for example) will balance time effects and this will, as RA Fisher taught us, a) be eliminated from the between treatment sums of squares but b) added to the residual sum of squares, hence invalidating the F-test

3) However, this is not the only possible explanation for the standard F-test to work. If trials are blocked by centre, which is very common, then even if there are no time trend effects per se but between centre differences, which can be and often are important, the F test will be invalid.

However, Carlisle's interesting analysis raises another issue. Suppose it were the fact that differences between centres were the explanation for the excess of 'too good to be true balance'. This would imply that any analysis of outcomes that did not condition on centre would be (strictly speaking) invalid. Within the pharma industry fitting centre effect is very common. Ex-pharma it is relatively rare, even to the extent that Marvin Zelan (in a paper with Zheng), who was familiar with the public health literature but not the pharma literature thought that it was hardly ever done. In fact it is so often done in pharma, that there is a huge literature on Type II versus Type III sums of squares to which pharma statisticians have contributed. So one extremely interesting thing that Carlisle's analysis raises is that even if there is no or hardly any fraud the analysis of outcomes may often be inappropriate.

Of course, standard pharma analysis would, as I say deal with this. Standard MRC & EORTC analysis would not. On the other hand pharma regularly use sub-centre permuted blocks and if there are trend effects across these, this would not be dealt with unless the blocks were fitted. This, as far as I am aware, almost never happens.

REFERENCES

SENN, S. J. 2000. The many modes of meta. Drug Information Journal, 34, 535-549.

SENN, S. J. 2004. Added Values: Controversies concerning randomization and additivity in clinical trials. Statistics in Medicine, 23, 3729-3753.

ZHENG, L. & ZELEN, M. 2008. MULTI-CENTER CLINICAL TRIALS: RANDOMIZATION AND ANCILLARY STATISTICS. Annals of Applied Statistics, 2, 582-600.